Thinking takes time

As AI becomes integrated into our workflows, we are constantly faced with a choice: use it to replace thought or to sharpen it. There are many instances where outsourcing decision-making is a good answer, but innovation requires the discipline to analyse, argue, and judge rather than simply automating responses.

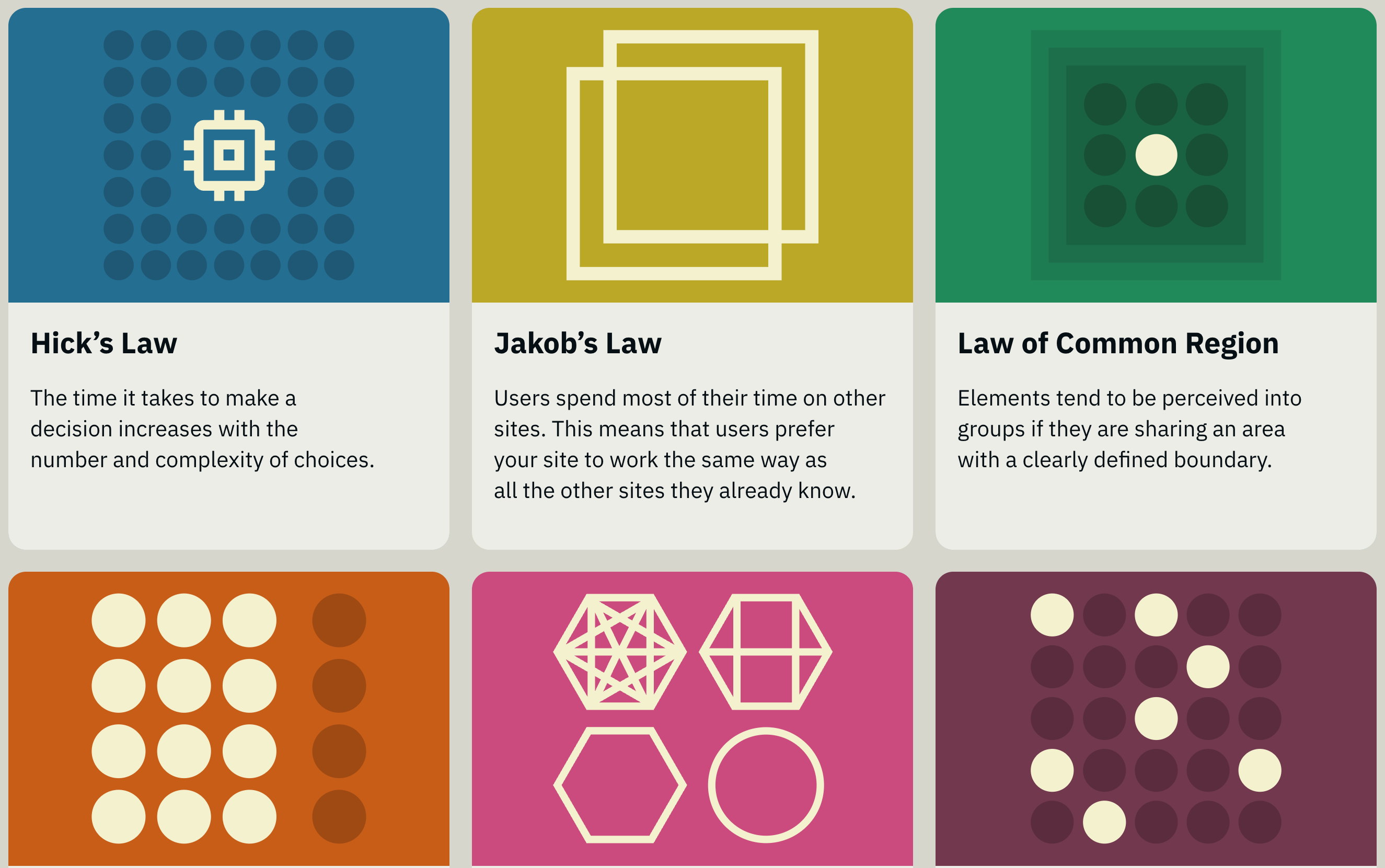

People tend to be lazy. The whole discipline of User Interface design is based on that insight. People take the path of least resistance. The excellent lawsofux.com catalogues the evolving ways to harness laziness.

Inherent laziness always tempts us to favour conclusions over doing the work of thinking. However, complex problem-solving requires a high tolerance for ambiguity and deep immersion over time. It requires structured thought. It requires writing.

Writing is not just documentation; it’s the process by which we think things through. It helps us to test claims, identify weaknesses, and refine logic. This is especially true of code. Code either works or it doesn’t.

We’re seeing vast changes in how code is produced. Perhaps 80–90% of code at Tui is now written by machines. This is doing a number of things. It’s taking away a lot of the drudgery of writing boilerplate code. It’s also producing a certain melancholia, because language, writing and thinking are entwined and good developers love to think.

Large Language Models automate writing, and this combines with our laziness to make reading and writing appear optional. Writing is seen as time-consuming and indulgent, argument as aggression, and sustained thought as a kind of social offence. The moment anything becomes difficult, extended, or conceptually demanding, the pressure is to shorten it, soften it, operationalise it, or dismiss it as waffle.

A knowledge company that does not value reading, writing, and argument is a fraud. It may still produce deliverables. It may still sell stuff. It may still wrap itself in the language of insight, strategy, and learning. But that is not intelligence; it’s the performance of intelligence.

Writing is thinking. People who do not write flatter themselves that they think perfectly well without it. Usually what they mean is that they have reactions, preferences, prejudices, and social instincts which they mistake for thought because they have never had to test them.

That is why anti-writing cultures are always anti-thinking cultures. If you never have to write something down in a form that can be analysed over time, you can go on indefinitely mistaking impulse for judgment.

Machines can help us think. They can red team arguments. They can scout for weaknesses. They’re increasingly useful in what used to be known as Quality Assurance. But there are a few problems with these new tools: they lack independence and are inherently sycophantic. The one I want to focus on is how they tend to remove writing and, in doing so, remove thinking.

We are living through an era of fake thinking. People prefer not to read, which means they don’t pay attention to an idea for long enough to understand it. Refusing to read or write drains your attention span, tolerance for complexity, ability to hold contradictions, and capacity for nuance. Before you can solve any complex problem you need to be capable of understanding it.

AI delivers conclusions without requiring thought. Long-form content (like this) requires people to think. Most people walk around with opinions they’ve never thought through. They feel like they believe something, but they’ve never tried to write it down in a way that would survive scrutiny.

Good judgment is formed in friction: contradiction, failed formulations, boredom, embarrassment, delay, wrong turns, the slow realisation that what sounded clever in your head is weak, derivative, incoherent or false. You do not get judgment by pressing a button and receiving a competent paragraph. You get it by staying with a problem long enough for its shape to emerge.

We need to analyse, argue and judge. Former General James Mattis has a wonderfully descriptive metaphor for judgment:

“Clausewitz observed that most intelligence is false, that reports contradict each other. The commander who has worked through this learns to see the way an eye adjusts to darkness, not by getting better light but by staying long enough to use what light there is. This ‘staying’ is what takes time. Compress the time and the friction does not disappear. You just stop noticing it.”

(Discovered in an excellent Guardian article. It’s appropriate that the least thinking President fired Mattis, or at least Mattis quit in protest: a classic case of “You can’t quit, you’re fired.”)

We lean on AI to confront our doubts. It promises reassurance, certainty, and validation in situations where our intuition falters. We reach for our phones to verify things we probably already know, seeking confirmation for even the simplest decisions. We’re training ourselves for incapacity.

A lot of the world prefers speed, atmosphere management, and social legibility to thinking. It prefers vague gestures to explicit claims. It prefers emotional policing to argument. Substance is constantly displaced into complaints about tone, professionalism, respect, and manner. On the one hand, these emotional responses are the capillary action of all illegitimate authority; on the other, they are the basis of civil society.

When writing is reduced to output, discussion can be reduced to vibes and argument reduced to interpersonal tension. People hear challenge as hostility. There is social investment in relentless agreement and a managerial relationship to language.

Using AI to bypass friction leads to a “feedback loop of deskilling,” where judgment and attention spans are progressively weakened. But use it instead to confront friction and it can actually strengthen our thinking. It can help us to stay with problems long enough for their shape to emerge, and to develop better judgment.

Use it as a difficult interlocutor, not a source of answers. Use it to stress-test assumptions, generate counter-arguments, and surface blind spots. Formulate ideas first. By all means use AI to brainstorm, but stick with it until genuinely new ideas emerge from your interactions. Use AI to challenge those ideas, not to replace the act of generating them.