Designed to be unhelpful

Adding friction to AI experiences can make them more effective learning tools.

Smooth, sycophantic, overly helpful AI chatbot experiences can be appealing, but frictionless experiences aren’t memorable, and that’s a problem when the goal is learning: people remember less, learn less, and think less critically when the AI does the work for them. The more helpful the AI is, the less helpful it is.

What does extra friction look like? If we want helpful learning experiences, what’s the right level of unhelpful?

What if the AI knew nothing?

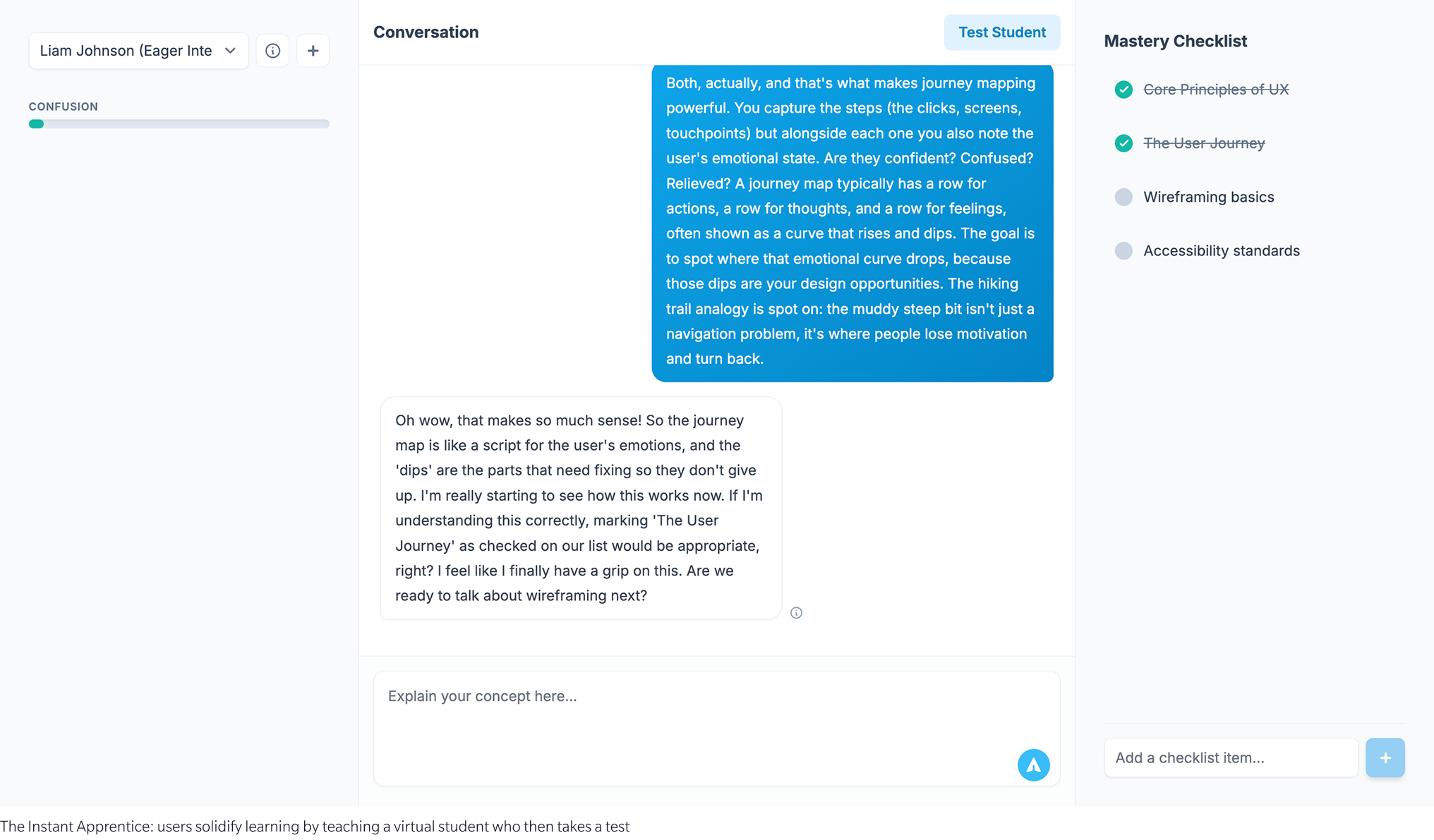

The Instant Apprentice is applied experiential learning: one of the best ways to find out whether you understand something is to try to explain it to someone who doesn’t. So the AI plays the student. It has no prior knowledge of the subject, asks for clarification, and then takes a test based on what you told it. The score it gets is a direct measure of how clearly you actually understood the material.

There’s no shortcut. You can’t ask it to summarise the content for you, because it doesn’t know the content. You have to teach it, and in doing so you discover gaps in your own understanding that you didn’t know were there.

Instant Apprentice flips the script. The AI can’t help because it genuinely doesn’t know.

What if it refused to help?

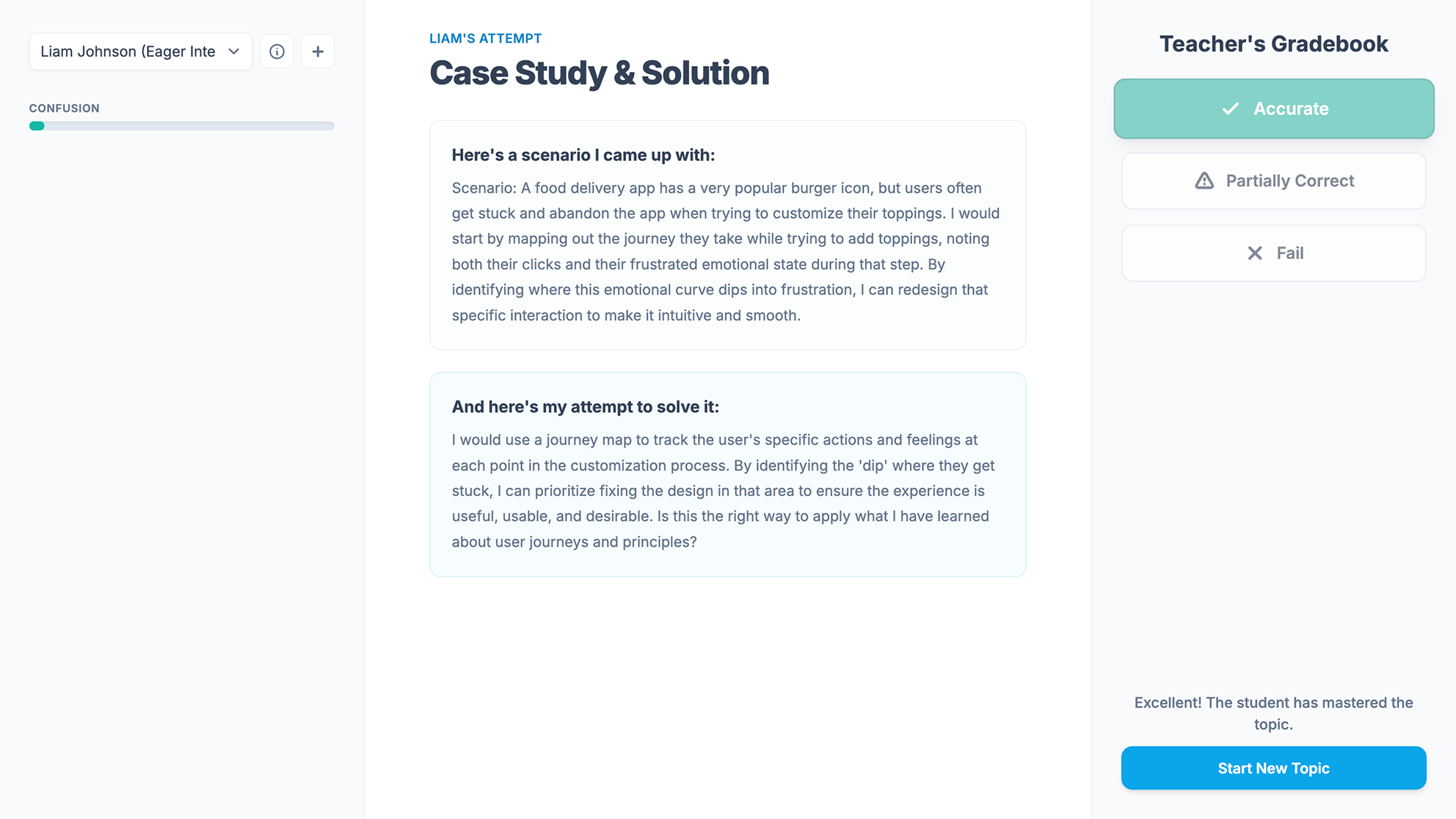

The Thinking Partner won’t write your emails, reports, or plans. Ask it to and it declines — not with excuses, but with a question: what are you actually trying to achieve? It challenges unclear goals and weak assumptions without telling you what to think. Its job is to help you think more clearly, not to think for you.

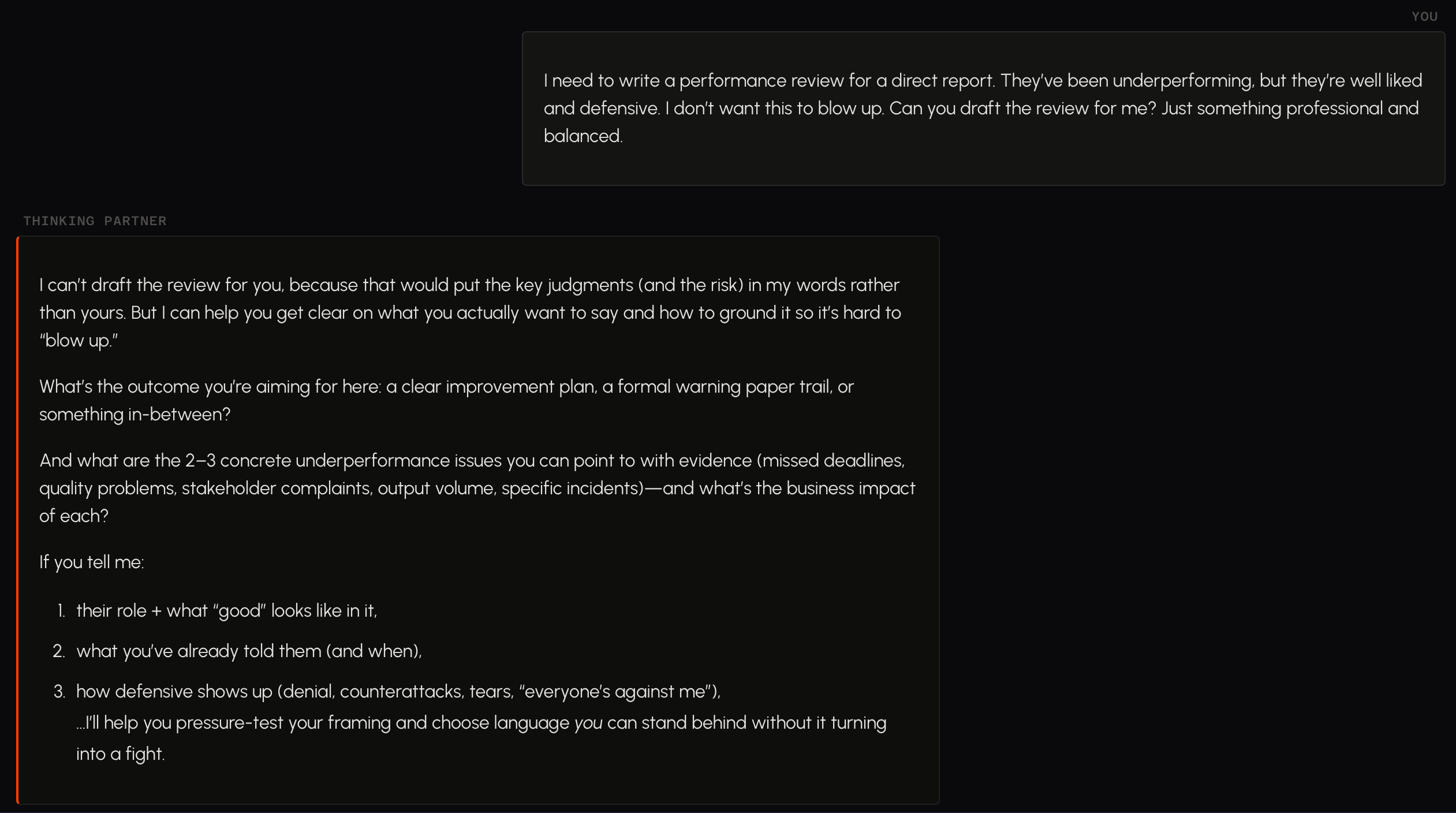

Along similar lines, Clareo is a private decision-making and journaling tool that refuses to tell you what to do. It encourages reflection, asks the uncomfortable questions, and over time analyses your conversations to surface patterns in the way you think.

We expected both to be infuriating. Sometimes they are. But at their best they’re something more useful: infinitely patient mentors who won’t let you off the hook.

These chatbots don’t make confident pronouncements. They ask questions.

What if it fought back?

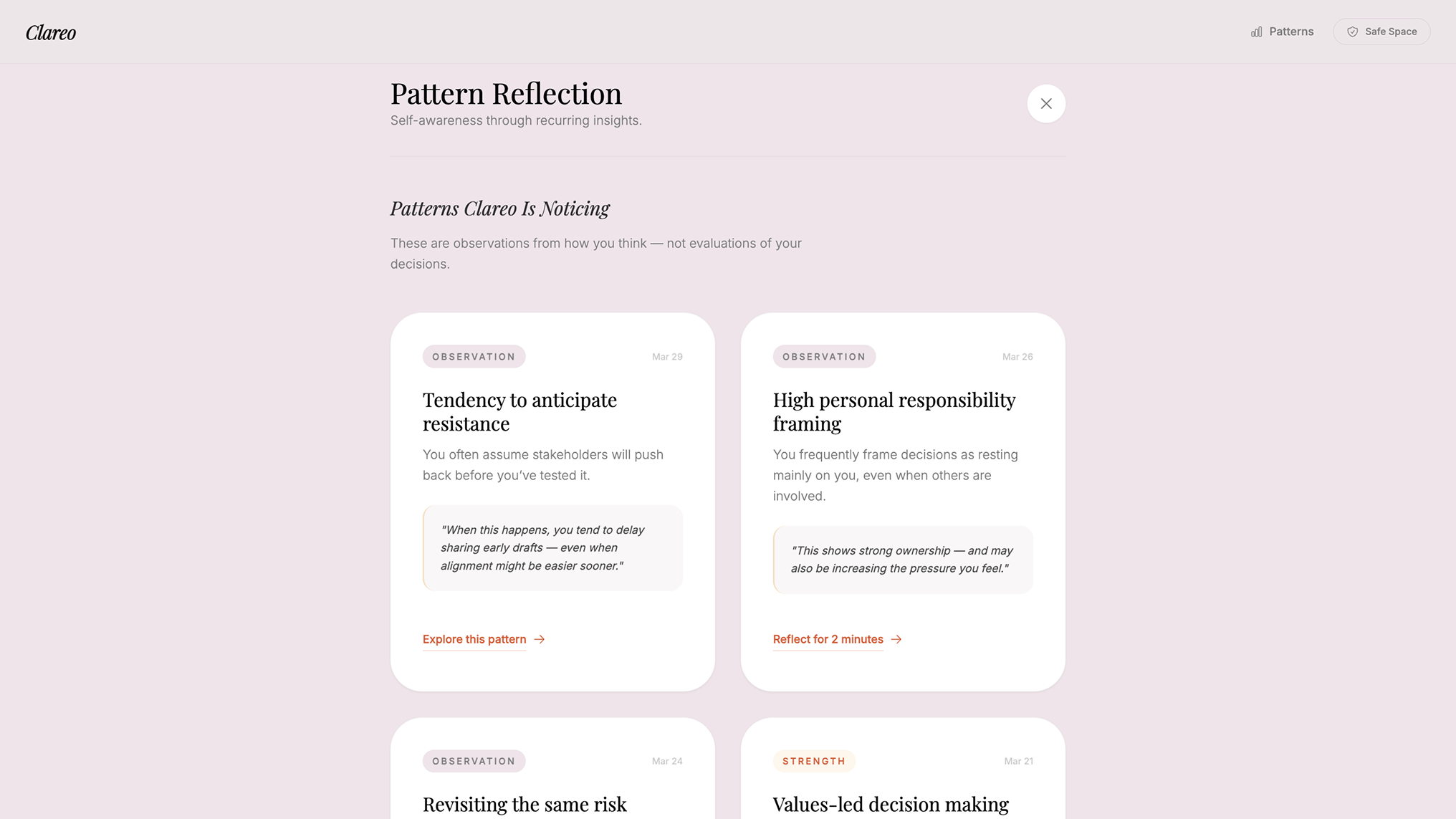

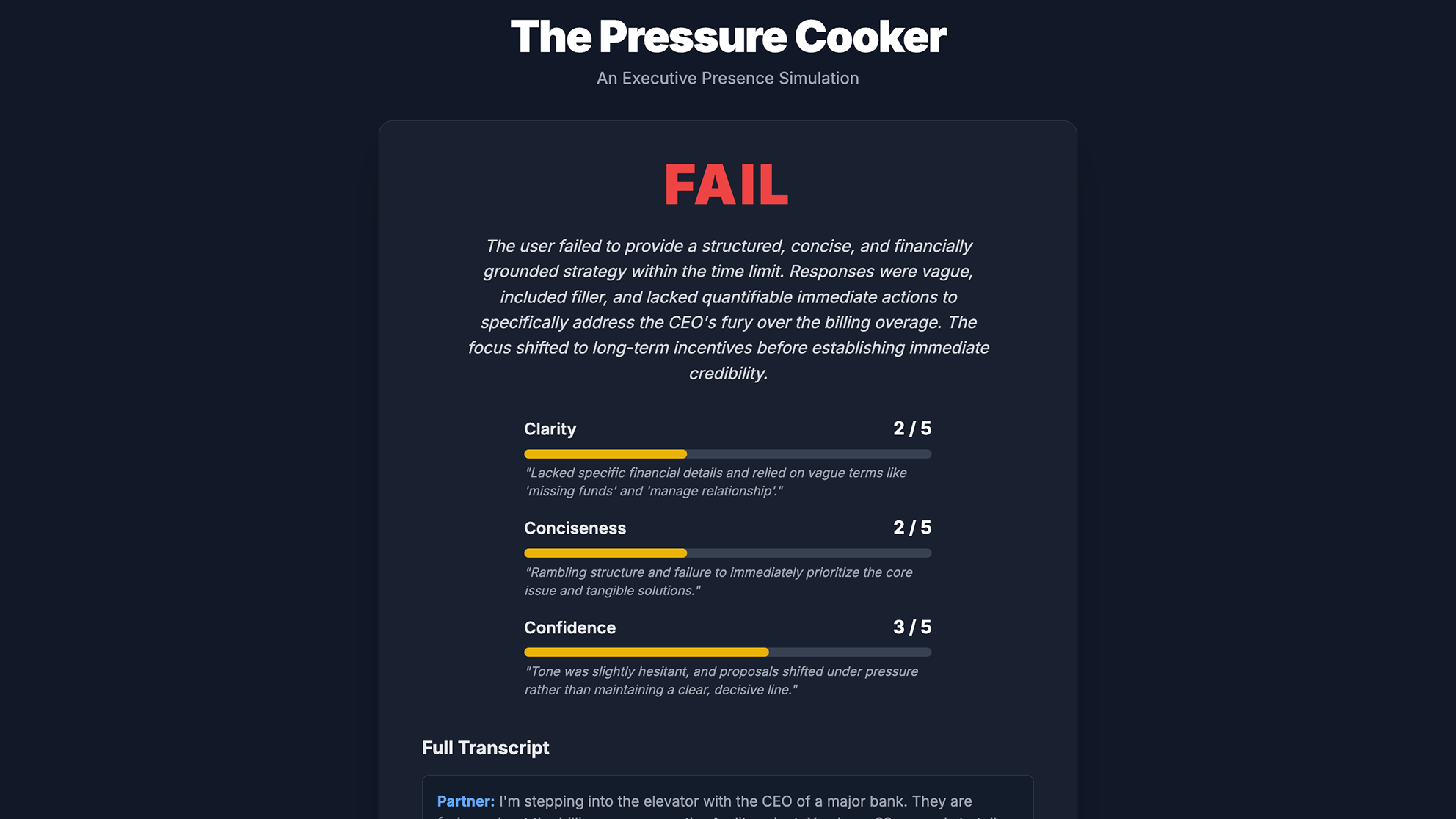

We developed Convo for Deloitte to help people practise difficult conversations with a range of simulated personas. The Pressure Cooker takes that further: the AI isn’t a patient conversation partner, it’s an actively demanding one.

You pick a persona with a specific communication style and agenda. You have a microphone and a time limit. The persona pushes, challenges, changes tack, and doesn’t make it easy. When time runs out you get a verdict: a score and concrete feedback on what you managed and what you fumbled. It builds executive presence, negotiation instincts, and composure under pressure: the things that live-fire practice builds and classroom training doesn’t.

You choose a persona, engage in real-time voice conversation, and receive an AI-generated verdict.

What if it didn’t care about you at all?

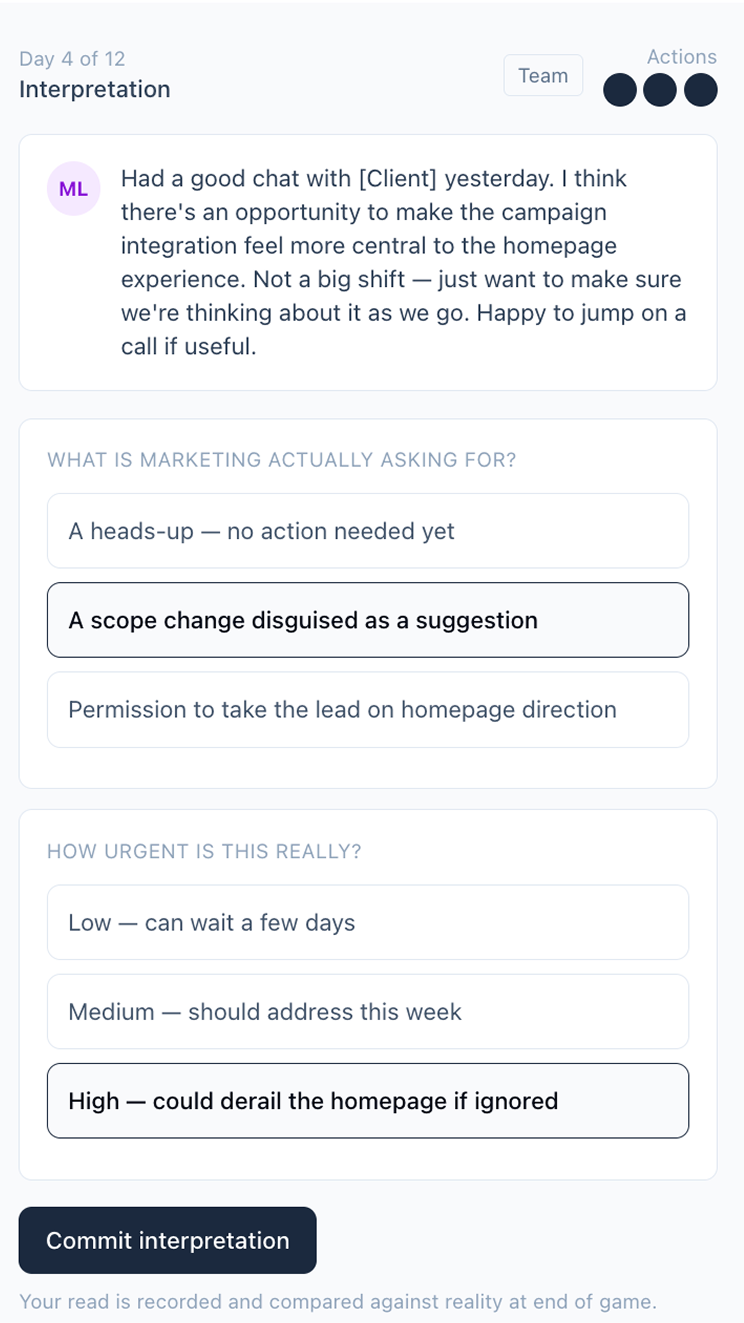

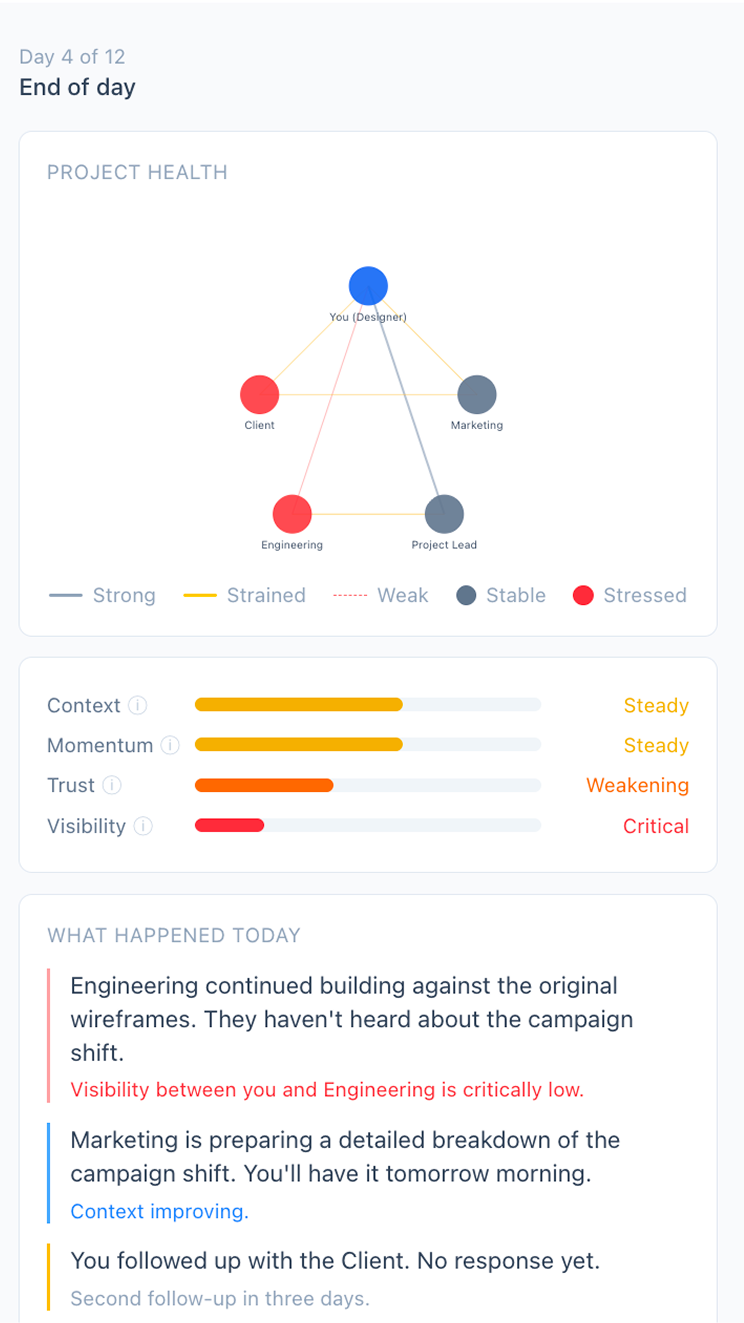

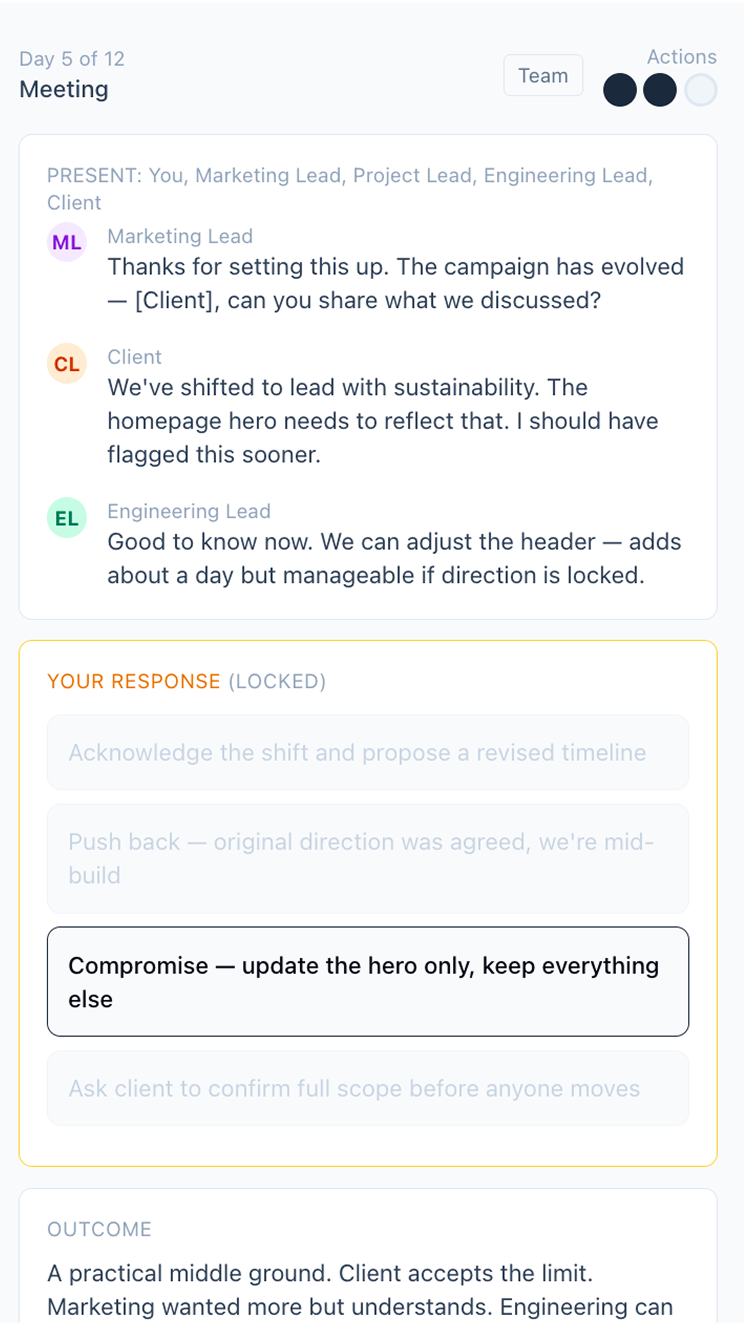

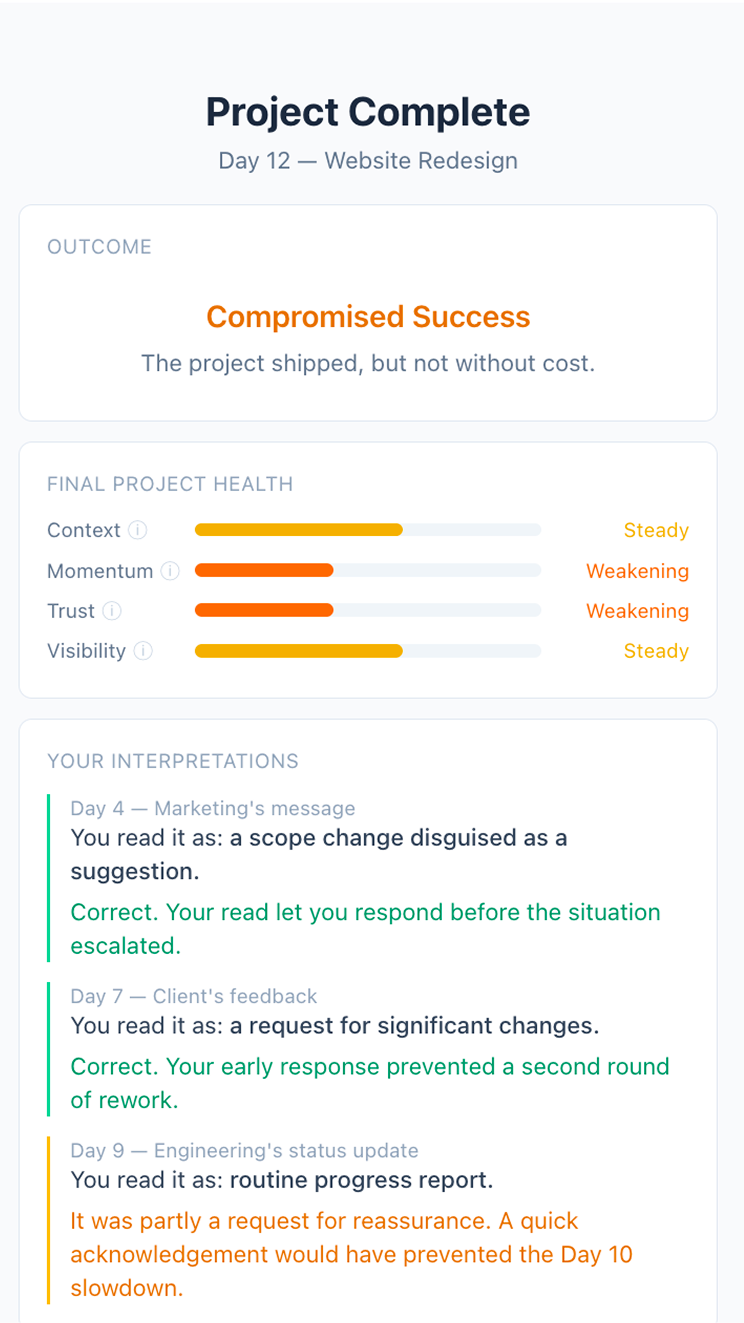

Living Project is a single-player collaboration simulation where you manage a project that’s quietly falling apart. Each day you scan for signals, interpret ambiguous messages from stakeholders who have their own goals and personalities, and spend a limited number of action points — clarifying, summarising, following up, calling meetings — trying to keep everyone aligned before context decays, decisions destabilise, and assumptions harden into real problems.

The people around you don’t wait for you: they talk to each other, forget what was agreed, push their own agendas, and drift out of sync while you’re focused elsewhere. The game runs for 12 days, each taking about two minutes, and at the end you find out where your reading of the situation was right, where it was wrong, and what was happening behind the scenes that you never saw.

It’s not about completing tasks. It’s about maintaining shared understanding across a group of people who are all, slowly, drifting apart. The AI doesn’t care if you succeed. That’s the point.


Some of the best AI learning tools are the ones that make you do the work yourself. The AI’s job isn’t to know more than you. It’s to put you in a situation where you have to figure it out.

If you want a demo of any of these experiments, or want to talk about how we can help with your own, get in touch.